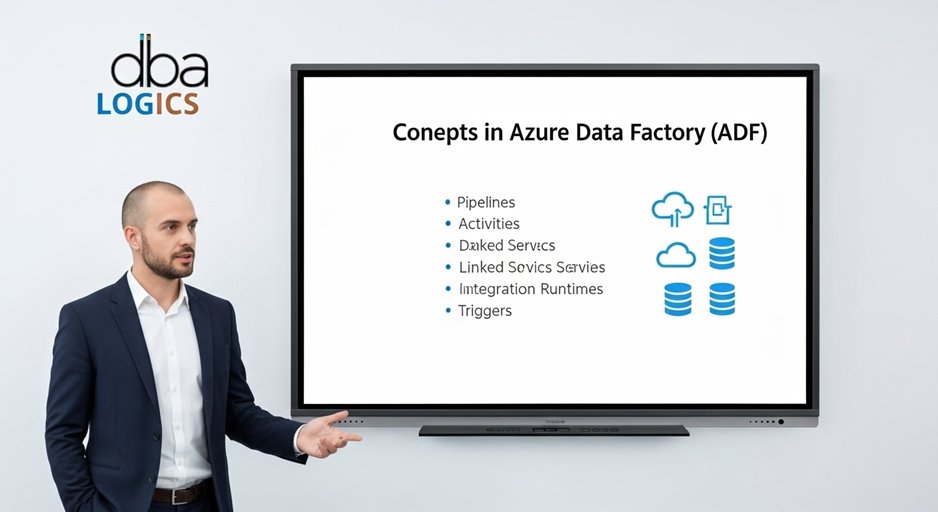

Concepts in Azure Data Factory

This is a brief overview of the key ideas behind Azure Data Factory (ADF), Microsoft's cloud data integration service. We will look at pipelines, activities, datasets, linked services, integration runtimes, and triggers, and illustrate what each component does with specific examples. Understanding these concepts is important when you create and manage data pipelines in Azure.

1. Pipelines

A pipeline is a logical grouping of activities that together perform a task, such as moving data from one location to the next. You can think of it as a series of instructions lined up in order. Activities are the building blocks of a pipeline.

Example:

Suppose you need to extract data from an on-premises SQL Server database, transform it, and load it into Azure Data Lake Storage Gen2. You would build a pipeline consisting of the following activities:

- Copy Activity: Extracts the data from the SQL Server database.

- Data Flow Activity: Transforms the extracted data (e.g. cleaning, filtering, aggregating).

- Copy Activity: Loads the transformed data into Azure Data Lake Storage Gen2.

You can define dependencies between these activities so that the Data Flow activity starts only after the first Copy activity has completed successfully, and the second Copy activity starts only after the Data Flow activity has completed.
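The dependency chain described above can be sketched in Python as a dictionary shaped like an ADF pipeline definition. This is a minimal sketch, not a deployable pipeline: the pipeline and activity names are hypothetical, the `inputs`, `outputs`, and `typeProperties` of each activity are left out, and `execution_order` is an illustrative helper that only mimics how `dependsOn` constrains the order of execution.

```python
def depends_on(activity_name):
    """Dependency entry: run only after `activity_name` has succeeded."""
    return [{"activity": activity_name, "dependencyConditions": ["Succeeded"]}]


# Hypothetical names; inputs, outputs and typeProperties are omitted for brevity.
pipeline = {
    "name": "SqlToDataLakePipeline",
    "properties": {
        "activities": [
            {"name": "ExtractFromSqlServer", "type": "Copy"},
            {
                "name": "TransformData",
                "type": "ExecuteDataFlow",
                "dependsOn": depends_on("ExtractFromSqlServer"),
            },
            {
                "name": "LoadToDataLake",
                "type": "Copy",
                "dependsOn": depends_on("TransformData"),
            },
        ]
    },
}


def execution_order(pipeline_def):
    """Return activity names in an order that respects every dependsOn entry."""
    remaining = {
        activity["name"]: {dep["activity"] for dep in activity.get("dependsOn", [])}
        for activity in pipeline_def["properties"]["activities"]
    }
    ordered = []
    while remaining:
        # An activity is ready once all of its dependencies have already run.
        ready = sorted(name for name, deps in remaining.items() if deps <= set(ordered))
        if not ready:
            raise ValueError("circular or unresolved dependency")
        ordered.extend(ready)
        for name in ready:
            del remaining[name]
    return ordered


print(execution_order(pipeline))
```

Activities without a `dependsOn` entry have no ordering constraint between them, which is why only the second and third activities carry one here.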

2. Activities

An activity is a single step within a pipeline. Every activity performs an operation on data; typical operations are copying, transforming, or looking up data.

Examples:

- Copy Activity: Copies data from a source dataset to a sink dataset. You can copy data between many different stores, such as Azure Blob Storage, Amazon S3, and on-premises SQL Server. For example, you could use a Copy Activity to copy log files from a particular Azure Blob Storage container into a data warehouse for analysis.

- Data Flow Activity: Runs a data flow, which is a visually designed data transformation. Data flows let you build complex transformations without writing code. For example, a data flow could clean customer data by deduplicating records, standardizing address fields, and enriching the data with third-party sources.

- Stored Procedure Activity: Runs a stored procedure in a database. This is handy for database-specific work that is part of your data pipeline. For example, you could use a Stored Procedure Activity to update a dimension table in your data warehouse once new data has been loaded.

- Web Activity: Calls a REST endpoint. This lets you integrate third-party services and APIs. For example, a Web Activity could kick off a machine learning model run once data has been loaded into a data lake.

- Lookup Activity: Reads a value or a set of rows from a dataset. This is useful for setting pipeline parameters dynamically from data. For example, you might use a Lookup Activity to retrieve the name of the most recent file in a folder and pass that value to the source of a Copy Activity.
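The Lookup example can be sketched as follows: one activity's output is passed to the next through an ADF expression. This is a hedged sketch. The activity, dataset, column, and parameter names (`LookupLatestFile`, `SourceFileDataset`, `FileName`, `fileName`) are hypothetical, the Lookup's own source and dataset settings are omitted, and it assumes the Lookup returns a single row (`firstRowOnly`).

```python
def first_row_expression(activity_name, column):
    """Build the expression that reads one column from a Lookup's first row."""
    return f"@activity('{activity_name}').output.firstRow.{column}"


# Hypothetical Lookup activity; its source and dataset settings are omitted.
lookup_activity = {
    "name": "LookupLatestFile",
    "type": "Lookup",
    "typeProperties": {"firstRowOnly": True},
}

# Hypothetical Copy activity whose source dataset takes the file name as a parameter.
copy_activity = {
    "name": "CopyLatestFile",
    "type": "Copy",
    "dependsOn": [
        {"activity": lookup_activity["name"], "dependencyConditions": ["Succeeded"]}
    ],
    "inputs": [
        {
            "referenceName": "SourceFileDataset",
            "type": "DatasetReference",
            "parameters": {
                "fileName": {
                    "value": first_row_expression(lookup_activity["name"], "FileName"),
                    "type": "Expression",
                }
            },
        }
    ],
}

print(copy_activity["inputs"][0]["parameters"]["fileName"]["value"])
```

The Copy activity depends on the Lookup so that the expression is evaluated only after the lookup result exists.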

3. Datasets

A dataset represents the data that an activity reads from (source) or writes to (sink) in a pipeline. It points to the physical place where the data lives, such as a file in Azure Blob Storage or a table in Azure SQL Database. A dataset can describe the data as a whole, for example an entire table or file, without referring to individual fields or rows.

Examples:

- Azure Blob Storage Dataset: A dataset that refers to a file or folder in Azure Blob Storage. You specify the storage account, the container, and the file path.

- Azure SQL Database Dataset: A dataset that refers to a table or view in an Azure SQL Database. You specify the server name, the database name, and the table name.

- Delimited Text Dataset: A dataset that represents a delimited text file such as a CSV file. You specify the file path, the column delimiter, and whether the file has a header row.

- JSON Dataset: A dataset that represents a JSON file. You specify the file path and the structure (schema) of the JSON data.
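As an illustration of the settings listed above, here is a minimal sketch of a delimited text dataset built as a Python dictionary in the shape of an ADF dataset definition. The helper `delimited_text_dataset` and all names passed to it are hypothetical; the sketch assumes the storage account itself is identified by a linked service that the dataset references by name, and the property names should be checked against the ADF documentation before use.

```python
def delimited_text_dataset(name, linked_service, container, folder_path, file_name,
                           column_delimiter=",", has_header=True):
    """Build a dataset definition for a CSV-style file in Azure Blob Storage."""
    return {
        "name": name,
        "properties": {
            "type": "DelimitedText",
            # The linked service holds the connection to the storage account.
            "linkedServiceName": {
                "referenceName": linked_service,
                "type": "LinkedServiceReference",
            },
            "typeProperties": {
                "location": {
                    "type": "AzureBlobStorageLocation",
                    "container": container,
                    "folderPath": folder_path,
                    "fileName": file_name,
                },
                "columnDelimiter": column_delimiter,
                "firstRowAsHeader": has_header,
            },
        },
    }


# Hypothetical names, for illustration only.
customers_csv = delimited_text_dataset(
    name="CustomersCsv",
    linked_service="BlobStorageLinkedService",
    container="raw",
    folder_path="customers/2024",
    file_name="customers.csv",
)
print(customers_csv["properties"]["typeProperties"]["location"])
```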

4. Linked services

A linked service is a connection to a data source or destination system. It holds the configuration needed to access that system (e.g. an Azure Storage account key or an Azure SQL Database server name).

Examples:

- Azure Blob Storage Linked Service: Holds the connection string for an Azure Blob Storage account.

- Azure SQL Database Linked Service: Holds the server name, the database name, and the credentials for an Azure SQL Database.

- Azure Key Vault Linked Service: Connects to an Azure Key Vault so that secrets such as database passwords and API keys can be stored safely.

- Linked Service using a Self-Hosted Integration Runtime: A linked service that connects through a self-hosted integration runtime so that it can reach data sources that are on-premises or behind a firewall.
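The Key Vault example can be combined with the Azure SQL Database example: instead of embedding the password, the SQL linked service points to a secret in Key Vault. The following is a minimal sketch under stated assumptions. The vault URL, server, database, user, and secret names are all made up, and the property layout is a sketch of the ADF linked service JSON that should be verified against the documentation.

```python
# Hypothetical Key Vault linked service; the vault name is made up.
key_vault_ls = {
    "name": "KeyVaultLinkedService",
    "properties": {
        "type": "AzureKeyVault",
        "typeProperties": {"baseUrl": "https://example-vault.vault.azure.net"},
    },
}


def key_vault_secret(store_linked_service, secret_name):
    """Reference a secret in Key Vault instead of embedding its value."""
    return {
        "type": "AzureKeyVaultSecret",
        "store": {
            "referenceName": store_linked_service,
            "type": "LinkedServiceReference",
        },
        "secretName": secret_name,
    }


# Hypothetical Azure SQL Database linked service; no password appears in it.
azure_sql_ls = {
    "name": "AzureSqlLinkedService",
    "properties": {
        "type": "AzureSqlDatabase",
        "typeProperties": {
            "connectionString": (
                "Data Source=tcp:example-server.database.windows.net,1433;"
                "Initial Catalog=SalesDb;User ID=etl_user;Encrypt=True"
            ),
            "password": key_vault_secret(key_vault_ls["name"], "sales-db-password"),
        },
    },
}

print(azure_sql_ls["properties"]["typeProperties"]["password"]["secretName"])
```

Keeping the secret in Key Vault means the linked service definition can be stored in source control without exposing credentials.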

5. Integration runtimes

An integration runtime (IR) is the compute infrastructure that actually does the work in a pipeline. Each runtime is an instance of that software running on a particular machine. Azure Data Factory manages the Azure-hosted runtimes for you, so you do not have to look after them yourself; the self-hosted runtime is the exception, because you install it on a machine in your own network.

Types of integration runtimes:

- Azure Integration Runtime: Runs activities in the Azure cloud. It is the default IR and is appropriate for the majority of data integration scenarios.

- Self-Hosted Integration Runtime: Runs activities on-premises or inside a private network. It is needed when a data source is on-premises or behind a firewall. The self-hosted IR is installed on a virtual machine or physical server in your network.

- Azure-SSIS Integration Runtime: Runs SSIS packages in Azure. It is used when you migrate existing SSIS packages to the cloud.
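A linked service selects the runtime it connects through with a `connectVia` reference; when that reference is absent, the default Azure integration runtime is used. The sketch below shows the idea with a small helper. `with_integration_runtime`, the runtime name `OnPremSelfHostedIR`, and the SQL Server connection details are hypothetical and only illustrate the shape of the definition.

```python
import copy


def with_integration_runtime(linked_service, runtime_name):
    """Return a copy of the linked service that connects through the given IR."""
    result = copy.deepcopy(linked_service)
    result["properties"]["connectVia"] = {
        "referenceName": runtime_name,
        "type": "IntegrationRuntimeReference",
    }
    return result


# Hypothetical on-premises SQL Server linked service without an explicit runtime.
on_prem_sql_ls = {
    "name": "OnPremSqlServerLinkedService",
    "properties": {
        "type": "SqlServer",
        "typeProperties": {
            "connectionString": "Data Source=sql01.corp.example;Initial Catalog=Erp;"
        },
    },
}

# Route the connection through a self-hosted IR installed inside the network.
via_self_hosted = with_integration_runtime(on_prem_sql_ls, "OnPremSelfHostedIR")
print(via_self_hosted["properties"]["connectVia"])
```

The helper copies the definition rather than mutating it, so the same base linked service can be paired with different runtimes, for example per environment.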

6. Triggers

A trigger determines when a pipeline run starts. A run can be started by a schedule, by a data change such as a file arriving in storage, or by an HTTP request.

Types of triggers:

- Schedule Trigger: Runs a pipeline on a fixed schedule (e.g. every day at 8 am).

- Tumbling Window Trigger: Runs a pipeline over a series of fixed-size, contiguous, non-overlapping time windows, each with a defined start and end time. This is useful when you process data in batches.

- Event-Based Trigger: Runs a pipeline in response to an event (such as a file being created or deleted in Azure Blob Storage).

- Manual Trigger: Lets you start a pipeline run on demand.
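To make the tumbling window idea concrete, the sketch below computes the windows that such a trigger would cover between two points in time. It is an illustration of the windowing logic only, not ADF code: `tumbling_windows` is a hypothetical helper, and the dates are arbitrary.

```python
from datetime import datetime, timedelta


def tumbling_windows(start, end, size):
    """Split [start, end) into fixed-size, contiguous, non-overlapping windows.

    A trailing partial window is not emitted, because a window only becomes
    available once its full period has elapsed.
    """
    if size <= timedelta(0):
        raise ValueError("window size must be positive")
    windows = []
    window_start = start
    while window_start + size <= end:
        windows.append((window_start, window_start + size))
        window_start += size
    return windows


# Two-hour windows over the first six hours of an arbitrary day.
windows = tumbling_windows(
    start=datetime(2024, 1, 1, 0, 0),
    end=datetime(2024, 1, 1, 6, 0),
    size=timedelta(hours=2),
)
for window_start, window_end in windows:
    print(window_start.isoformat(), "->", window_end.isoformat())
```

Each window's end is the next window's start, so every record with a timestamp in the range falls into exactly one batch. A schedule trigger, by contrast, only fires at given times and carries no window boundaries.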

Conclusion:

Using Azure Data Factory effectively starts with understanding these high-level concepts: pipelines, activities, datasets, linked services, integration runtimes, and triggers. Once you have mastered them, you will be able to design, build, and run sophisticated, scalable data integration solutions that meet your company's data processing needs. Each element plays an essential part in the overall flow of data, and configuring them well keeps data movement and transformation across the Azure ecosystem smooth and maintainable.
